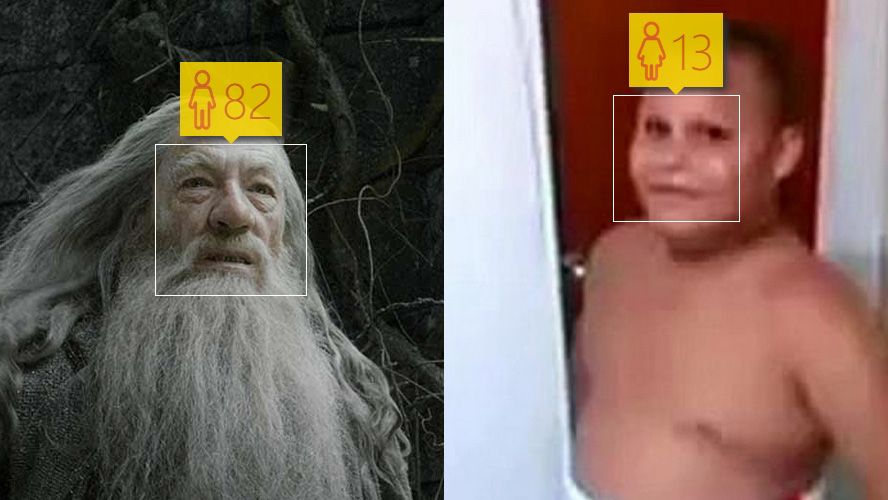

At the recent Build 2015 event, Microsoft released its How Old Do I Look web app, a tool that estimates a person's age from a photo. With it, the company set out to test its new face-detection API. The experiment has been a success: a Microsoft vice president recently confirmed that some 240 million photos have been uploaded by more than 30 million users. We've done a bit of digging into how it works, and this is what we found.

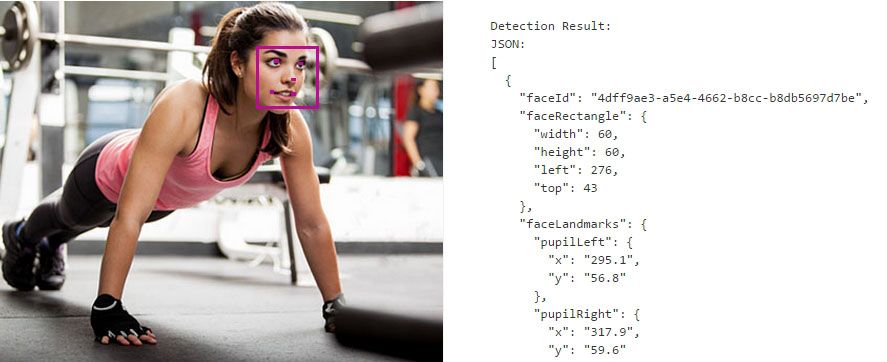

The page detects age and gender through an unusual process based on the location of key parts of the face. Each face is described by five control points: the two eyes, the tip of the nose, and the two corners of the mouth. Once those points are found in the photo, they are located spatially with Cartesian coordinates, and the tool then tries to detect a handful of other optional points of interest that divide the face into as many as 27 sections. This produces a specific face profile that, among other things, lets a person appearing in a photo be recognized automatically from those proportions.
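To make the idea of a "face profile" more concrete, here is a minimal Python sketch, assuming the five control points are already available as (x, y) pixel coordinates. The `Landmarks` class, its field names and the three ratios are our own hypothetical choices for illustration, not the actual Project Oxford API. Dividing every distance by the distance between the eyes makes the profile independent of how large the face appears in the photo.

```python
from dataclasses import dataclass
from math import dist


@dataclass(frozen=True)
class Landmarks:
    """Hypothetical container for the five control points, as (x, y) pixels."""
    pupil_left: tuple[float, float]
    pupil_right: tuple[float, float]
    nose_tip: tuple[float, float]
    mouth_left: tuple[float, float]
    mouth_right: tuple[float, float]


def midpoint(a: tuple[float, float], b: tuple[float, float]) -> tuple[float, float]:
    """Point halfway between a and b."""
    return ((a[0] + b[0]) / 2, (a[1] + b[1]) / 2)


def face_profile(lm: Landmarks) -> dict[str, float]:
    """Distances between control points divided by the inter-eye distance.

    Because every value is a ratio, scaling the whole face up or down
    (a closer or farther camera) leaves the profile unchanged.
    """
    eye_span = dist(lm.pupil_left, lm.pupil_right)
    if eye_span == 0:
        raise ValueError("eye points coincide; cannot normalize")
    eye_mid = midpoint(lm.pupil_left, lm.pupil_right)
    mouth_mid = midpoint(lm.mouth_left, lm.mouth_right)
    return {
        "mouth_width": dist(lm.mouth_left, lm.mouth_right) / eye_span,
        "eyes_to_nose": dist(eye_mid, lm.nose_tip) / eye_span,
        "nose_to_mouth": dist(lm.nose_tip, mouth_mid) / eye_span,
    }


# Usage: the same face photographed at twice the size yields the same profile.
small = Landmarks((100, 100), (160, 100), (130, 140), (110, 170), (150, 170))
big = Landmarks((200, 200), (320, 200), (260, 280), (220, 340), (300, 340))
print(face_profile(small))
print(face_profile(big))
```

Working with ratios rather than raw pixel distances is what allows the same person to be matched across photos taken at different distances.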

Based on how far apart those points are and on an analysis of typical human facial ratios, the tool can infer age and gender. How well it does this obviously depends on the image resolution and on the angle of the face relative to the camera (it can't handle side profiles), not to mention certain unusual faces that are hard to analyze. For example, it is quite likely to judge an obese person to be younger than they are, because their proportions fall outside the usual ranges.
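One way to read "falls outside the usual ranges of proportions" is as a simple range check on scale-invariant ratios. The sketch below is our own illustration, not Microsoft's method: the ratio names and the `EXAMPLE_RANGES` values are hypothetical placeholders, not real anthropometric data. A face with flagged ratios is one where a geometry-only age estimate deserves less trust.

```python
def out_of_range(profile: dict[str, float],
                 usual_ranges: dict[str, tuple[float, float]]) -> list[str]:
    """Return the names of ratios lying outside their usual (low, high) range.

    Ratios that have no entry in `usual_ranges` are not checked.
    """
    flagged = []
    for name, value in profile.items():
        if name in usual_ranges:
            low, high = usual_ranges[name]
            if not low <= value <= high:
                flagged.append(name)
    return flagged


# Placeholder ranges chosen only for illustration; NOT real anthropometric data.
EXAMPLE_RANGES = {"mouth_width": (0.6, 0.9), "nose_to_mouth": (0.4, 0.7)}

typical = {"mouth_width": 0.70, "nose_to_mouth": 0.50}
unusual = {"mouth_width": 1.05, "nose_to_mouth": 0.50}
print(out_of_range(typical, EXAMPLE_RANGES))  # []
print(out_of_range(unusual, EXAMPLE_RANGES))  # ['mouth_width']
```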

From there, the accuracy of the tool depends on the data stored in the knowledge base associated with the detector. Similar services compare wrinkles and age lines against an external reference template. In this case, according to the information published for Azure and Project Oxford, the process is purely analytical, based on the geometry of the control points.
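To show why the knowledge base matters so much for a purely geometric approach, here is a sketch of one simple way such a base could be used: average the ages of the stored profiles whose ratio vectors are closest to the new face. Nearest-neighbour averaging is our own stand-in technique; we don't know what model Microsoft actually uses, and `estimate_age` and the sample data are hypothetical.

```python
from math import dist


def estimate_age(profile: list[float],
                 knowledge_base: list[tuple[list[float], float]],
                 k: int = 3) -> float:
    """Average the ages of the k stored profiles nearest (Euclidean) to `profile`.

    Each knowledge-base entry is (ratio_vector, known_age); all ratio
    vectors must have the same length as `profile`.
    """
    if not knowledge_base:
        raise ValueError("knowledge base is empty")
    if k < 1:
        raise ValueError("k must be at least 1")
    nearest = sorted(knowledge_base, key=lambda entry: dist(profile, entry[0]))[:k]
    return sum(age for _, age in nearest) / len(nearest)


# Hypothetical knowledge base of (ratio vector, age) pairs.
kb = [([0.60, 0.50], 20.0), ([0.70, 0.60], 30.0),
      ([0.90, 0.80], 60.0), ([0.61, 0.51], 22.0)]
print(estimate_age([0.60, 0.50], kb, k=2))  # 21.0
```

The estimate can only be as good as the stored examples: a face whose proportions resemble no entry in the base will simply be matched to whatever happens to be closest.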

These techniques are already used by many services, such as Facebook's auto-tagging feature and the camera app on Android devices. We haven't yet reached the point where we can use our faces as a security feature like in the movies, but it's only a matter of time.
